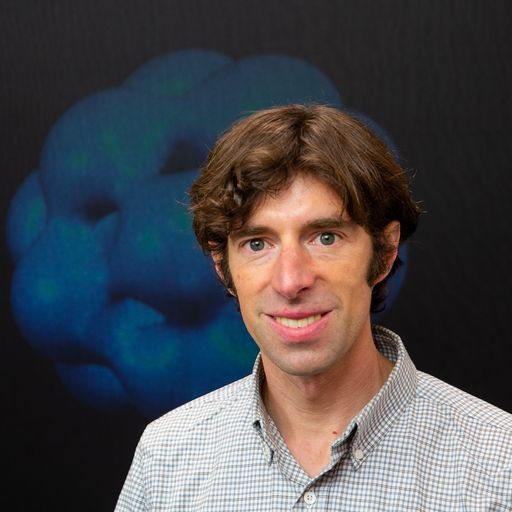

Meet the team Q&A: Marika Taylor

In this Erlangen Hub Q&A, we spoke to Marika Taylor, Co-Investigator and Theme C Deputy Lead. Marika is a Professor of Mathematics, Physics and AI at the University of Southampton. She trained in theoretical physics under Stephen Hawking, and is currently interested in geometric ML for fundamental physics applications and in physics-inspired methods for ML. Marika was a Turing Institute Fellow and is a recipient of a Vidi grant (the “Dutch ERC”). She has a long track record with start-ups, including in encryption and fintech.

Can you share a bit about your background and your current research focus?

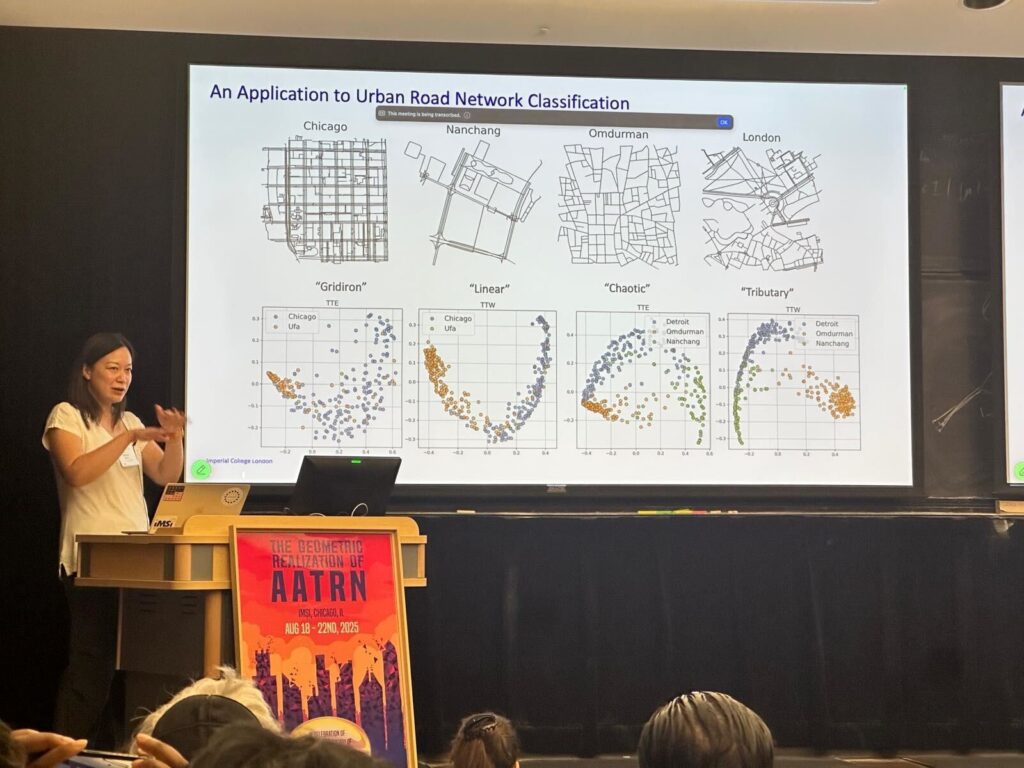

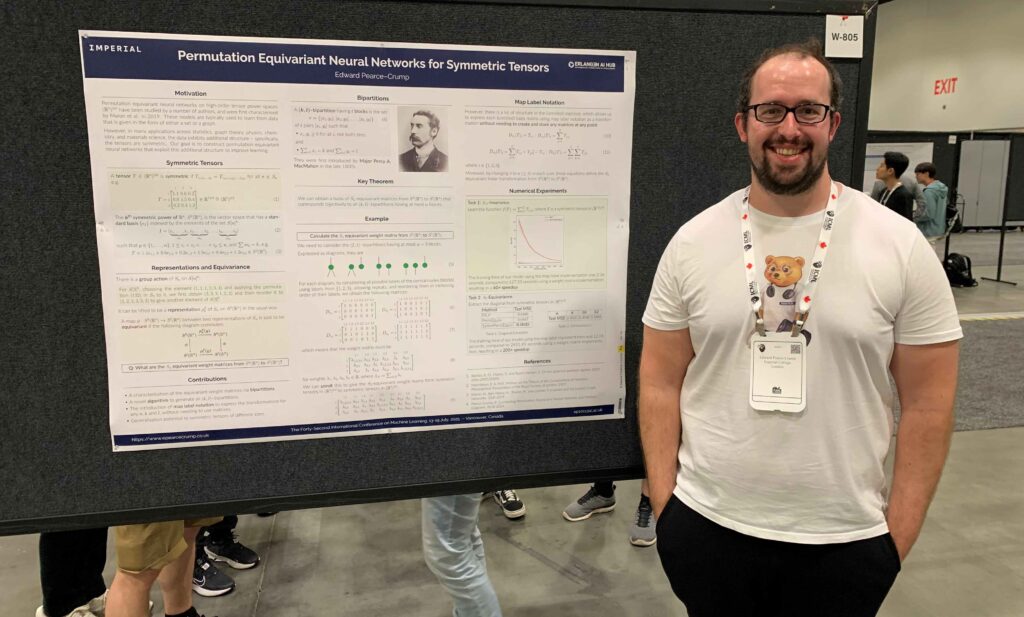

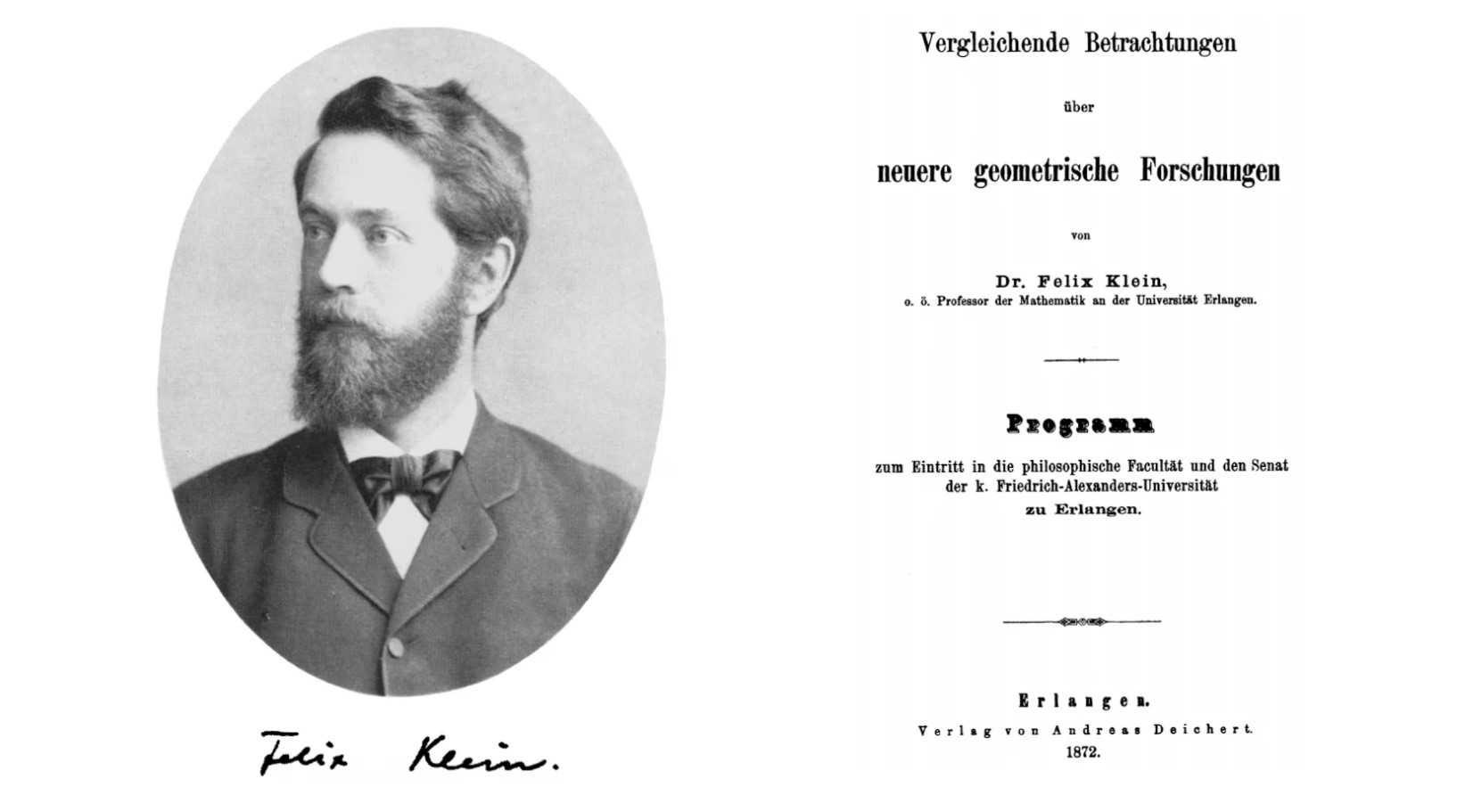

My main research background is in mathematical and theoretical physics, particularly string theory, quantum theory and gravity. In parallel with this work in fundamental science, I have always been involved in mathematical modelling of real-world problems, particularly in finance, and have used neural nets for many years in that context. In recent years I’ve seen a convergence between my fundamental science research and my applied work: concepts from my areas of physics are being used within AI, while AI is increasingly being applied within fundamental physics too.

A nice example would be graph neural networks (GNNs) in non-Euclidean geometry. Non-Euclidean geometry underpins our understanding of Einstein’s theory of general relativity (gravity), and many of the physical insights obtained from studying particular geometries in gravity lead to insights into GNNs embedded in such geometries.

Another example is symmetry. Physicists always build their understanding of a system’s underlying symmetries, exact or approximate, into their modelling of it. One can similarly build symmetry equivariance into neural networks. For example, if you are classifying images of 3D objects and are agnostic about their orientation, a network with rotational equivariance built in will classify them more efficiently. My group is currently exploring more general symmetry-equivariant networks, drawing on insights from physics; this enables us to substantially reduce the number of parameters that need to be learned, and also to understand conceptually the patterns found in previous algorithms.

We are also interested in using the physics of time-dependent systems to develop spiking neural networks. These are nature-inspired, in that neurons only fire when a threshold is met, which makes them much more energy efficient.
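The symmetry idea above can be illustrated with a deliberately tiny sketch, which is an illustration under stated assumptions and not a description of the group’s networks. A hypothetical orientation-sensitive scorer (`linear_score`, a weighted sum of pixels) is made invariant to 90-degree rotations by averaging it over all four rotations of its input, i.e. group averaging over the cyclic group C4. For an orientation-agnostic classifier the property needed is invariance, the special case of equivariance in which rotating the input leaves the output unchanged. All function names here are illustrative.

```python
from typing import Callable, List

Grid = List[List[float]]


def rot90(grid: Grid) -> Grid:
    """Rotate a square grid 90 degrees counter-clockwise (same convention as numpy.rot90)."""
    n = len(grid)
    return [[grid[j][n - 1 - i] for j in range(n)] for i in range(n)]


def linear_score(weights: Grid) -> Callable[[Grid], float]:
    """Hypothetical orientation-sensitive 'model': a weighted sum of pixels."""
    def score(image: Grid) -> float:
        return sum(w * x
                   for w_row, x_row in zip(weights, image)
                   for w, x in zip(w_row, x_row))
    return score


def c4_symmetrize(model: Callable[[Grid], float]) -> Callable[[Grid], float]:
    """Average a model over the four 90-degree rotations of its input.

    The result is invariant under C4: rotating the input only cyclically
    permutes the four terms being averaged.
    """
    def symmetric_model(image: Grid) -> float:
        total = 0.0
        current = image
        for _ in range(4):
            total += model(current)
            current = rot90(current)
        return total / 4
    return symmetric_model


if __name__ == "__main__":
    # Weights that only "look at" the top-left corner.
    weights = [[1.0, 0.0, 0.0],
               [0.0, 0.0, 0.0],
               [0.0, 0.0, 0.0]]
    # An image with a bright pixel in the top-right corner.
    image = [[0.0, 0.0, 5.0],
             [0.0, 1.0, 0.0],
             [0.0, 0.0, 0.0]]

    plain = linear_score(weights)
    invariant = c4_symmetrize(plain)
    for k in range(4):
        print(k, plain(image), invariant(image))
        image = rot90(image)
```

Run as a script, the plain score changes with orientation (0.0, 5.0, 0.0, 0.0 across the four rotations), while the symmetrized score stays at 1.25. Averaging a whole model costs four forward passes per input; equivariant networks instead build the symmetry into each layer, sharing weights across orientations, which is one route to the parameter savings mentioned above.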

What inspired you to pursue this area?

Throughout my career I have always worked on the frontiers of fundamental science. String theory is a “theory of everything”. It uses concepts from right across mathematics and also leads to new insights and ideas in mathematics – topological quantum field theory, for which Ed Witten won the Fields Medal, is a notable example. In parallel I’ve always enjoyed using the breadth of my knowledge in mathematical sciences for real world applications and I’ve often found that I get new insights into fundamental science from the applied work I’ve done. Over the last few years I’ve gradually moved more and more into AI, for both fundamental science and applied work, because there are so many exciting developments.

Which themes are you connected to within the Erlangen AI Hub and how does your work within the hub intersect with your research background?

The main theme that I am connected with is “Understanding Learning”, but I link with all the themes. Much of what I do relates to conceptually understanding hidden structures in data, and how geometry and topology can be used to characterize them. My physics insights into geometry also help me relate geometric and topological insights to real-world phenomena.

What attracted you to the Erlangen AI Hub and what do you hope to see it achieve?

The hub is exploring the mathematical foundations of intelligence – this is essential to develop better, more efficient and safer models, which in turn will allow us to use AI in more contexts.

What’s been the most surprising or exciting finding in your work so far?

I don’t think I would have predicted ten years ago that my two parallel streams of research would become so closely connected, with physics giving insights into developing AI, and AI starting to be used more in physics. (For AI to be adopted more widely in physics, though, we will need robustness and accuracy.)

What challenges have you faced in your research, and how did you overcome them?

I like to take on research problems that are quite open-ended and conceptually challenging. Inevitably that means that at times I get stuck or can’t quite see where to go next! When that happens, I take a break, think about something else, and talk to others to get fresh ideas.

What advice would you give to someone just starting out in your field?

I would advise somebody to follow their interests, and see where these take them!